
Using AI in Creative Technology

Oct 18

Shared by Gian Pablo Villamil

One of the most important technology trends right now is the growth of artificial intelligence (AI) in areas involving human interaction. As a creative technology firm that produces immersive experiences, the team at Britelite has been looking ahead to how AI can be applied in our work.

AI has implications beyond its traditional applications in design. It can enhance interactions by sensing how people are using an installation and adapting to them. It can help create unique media and content for experiences. Finally, it can build intelligent connections by analyzing data and proposing solutions. Deploying AI in an installation can make for a much more impactful and useful production.

Sensing people

One of the most powerful uses of AI in interactive installations is sensing how people are interacting with them. By applying AI to audio, video, touch, and gesture input, we can create installations that transcend the limitations of screens and conventional input devices.

Voice

Voice is a very natural way for people to interact, and tools are increasingly available to allow voice input in a number of different scenarios.

Two systems we have used are Google’s voice API and Amazon’s Alexa. They let installations easily receive voice commands and tap into extensive network resources to address a user’s needs.

Sentiment

Even when someone is not talking to an installation or otherwise interacting with it, it can be helpful to know what they are doing and how they are feeling. There are a number of good ways to do this.

Google AIY Video Kit

We’ve experimented with this extremely inexpensive system and have been very impressed. It pairs a dedicated vision processing circuit with an inexpensive computer. It can easily detect whether the people it sees are happy or sad, and respond by changing the color of a light. It can also classify objects, although we’ve noticed it is far better at identifying dogs than furniture.

Amazon DeepLens

The DeepLens is far more sophisticated: it is a network-connected video camera that can recognize activities. It ties into Amazon’s extensive set of cloud services and can do fun things like identify whether your food is a hot dog or not and, more interestingly, recognize what kinds of activities people are engaged in.

Gesture

Identifying people’s gestures can be a hugely rewarding way to interact with them. In large-scale interactives, encouraging people to move their bodies can be a lot of fun.

We’ve worked with a couple of systems that learn to identify gestures by studying how real people move. A key challenge is separating meaningful gestures, made to trigger a specific action, from general reactions to motion. The good news is that careful design can mitigate these issues.
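One common design tactic for telling intent apart from incidental motion is a "dwell" rule: only act once the recognizer has been confident for several consecutive frames. The sketch below assumes a hypothetical gesture classifier that emits one confidence value (0 to 1) per video frame; the function name, the 0.8 threshold, and the 10-frame dwell are illustrative choices, not the behavior of any particular system we used.

```python
from typing import Iterable, List


def confirm_gestures(
    confidences: Iterable[float],
    threshold: float = 0.8,
    dwell_frames: int = 10,
) -> List[int]:
    """Return the frame indices at which a held gesture is confirmed.

    `confidences` is a hypothetical per-frame probability that the person is
    making the target pose. A gesture is confirmed only after `dwell_frames`
    consecutive frames at or above `threshold`. It fires once per continuous
    hold and re-arms when the run is broken.
    """
    confirmed: List[int] = []
    run = 0  # length of the current run of confident frames
    for i, p in enumerate(confidences):
        if p >= threshold:
            run += 1
            if run == dwell_frames:  # fire exactly once per hold
                confirmed.append(i)
        else:
            run = 0  # a dropped pose or passing motion resets the timer
    return confirmed


# A deliberate 15-frame hold, a pause, then a brief 3-frame flicker of motion.
frames = [0.9] * 15 + [0.2] * 5 + [0.95] * 3
print(confirm_gestures(frames))  # [9]
```

The trade-off is latency: a longer dwell rejects more accidental triggers but makes the installation feel slower to respond, so in practice it is tuned with real visitors.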

Making art/content

An especially exciting way to apply AI in experience design is to use it to help create highly original interactive artwork.

Images

Google’s DeepDream neural network has attracted a lot of attention recently for its highly surreal and original imagery. As more artists and designers have used it, it has become an interesting tool for achieving striking effects.

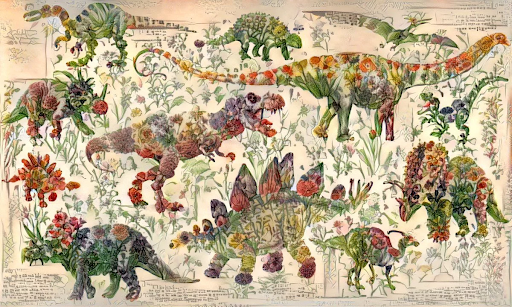

One very interesting technique is “style transfer,” in which the look of one image is applied to the content of another. A popular example combined a children’s book about dinosaurs with illustrations of flowers to create an intriguing set of images.
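To make "look" versus "content" concrete, here is a minimal sketch of the two loss terms used in neural style transfer as popularized by Gatys and colleagues. In a real system the feature maps come from a pretrained convolutional network and the generated image is optimized by gradient descent; here we assume tiny hand-written feature maps, a simplified mean-squared normalization, and illustrative `alpha`/`beta` weights. The key idea is that the style term compares Gram matrices, which record which features co-occur but throw away where they occur.

```python
from typing import List

Matrix = List[List[float]]


def gram_matrix(features: Matrix) -> Matrix:
    """features[c][p] is the activation of channel c at spatial position p.

    Entry (i, j) is the average product of channels i and j over all
    positions: it captures texture/color statistics, not layout.
    """
    n_positions = len(features[0])
    return [
        [sum(a * b for a, b in zip(fi, fj)) / n_positions for fj in features]
        for fi in features
    ]


def mean_squared_diff(x: Matrix, y: Matrix) -> float:
    diffs = [(a - b) ** 2 for rx, ry in zip(x, y) for a, b in zip(rx, ry)]
    return sum(diffs) / len(diffs)


def content_loss(generated: Matrix, content: Matrix) -> float:
    # Position-by-position comparison: penalizes changes to layout.
    return mean_squared_diff(generated, content)


def style_loss(generated: Matrix, style: Matrix) -> float:
    # Gram-matrix comparison: penalizes changes to "look", ignores layout.
    return mean_squared_diff(gram_matrix(generated), gram_matrix(style))


def total_loss(generated: Matrix, content: Matrix, style: Matrix,
               alpha: float = 1.0, beta: float = 1000.0) -> float:
    # alpha/beta trade off faithfulness to content against strength of style.
    return alpha * content_loss(generated, content) + beta * style_loss(generated, style)


features = [[1.0, 2.0], [3.0, 4.0]]
shuffled = [[2.0, 1.0], [4.0, 3.0]]  # same values, spatial positions swapped
print(style_loss(features, shuffled))    # 0.0: identical "look"
print(content_loss(features, shuffled))  # 1.0: different layout
```

Because rearranging positions leaves the style term unchanged, an optimizer can keep the dinosaurs where they are (content) while repainting them with the statistics of the flower illustrations (style).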

Another fascinating use of AI to create imagery is the website thispersondoesnotexist.com, where two competing neural networks (a generative adversarial network, or GAN) produce realistic photos of people who… do not exist.

Music

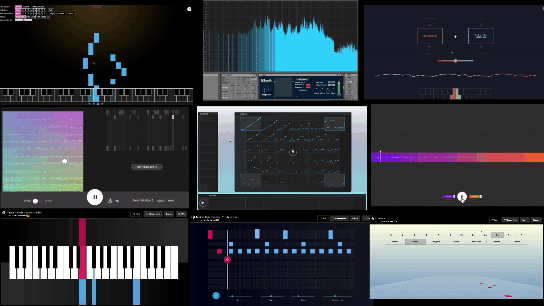

Music is a great field for AI. Google has applied its TensorFlow framework to build Magenta, a set of tools for aiding music performance.

Magenta includes tools for making entirely new types of sound, as well as applications that can improvise musical works from a small set of notes.

“A primary goal of the Magenta project is to demonstrate that machine learning can be used to enable and enhance the creative potential of all people.

The demos and apps listed on this page illustrate the work of many people--both inside and outside of Google--to build fun toys, creative applications, research notebooks, and professional-grade tools that will benefit a wide range of users.”

Music can be generated automatically, either from scratch or from the input of users who need not be musicians.
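As a toy illustration of improvising from a handful of user-supplied notes, the sketch below learns which note tends to follow which in a short seed melody and then continues it. Magenta's own models are neural networks; this deliberately swaps in a much simpler first-order Markov chain, and the function names are hypothetical. Notes are MIDI note numbers (60 is middle C).

```python
import random
from collections import defaultdict
from typing import Dict, List, Optional


def build_transitions(notes: List[int]) -> Dict[int, List[int]]:
    """Map each note to every note that followed it in the seed melody."""
    transitions: Dict[int, List[int]] = defaultdict(list)
    for current, nxt in zip(notes, notes[1:]):
        transitions[current].append(nxt)
    return transitions


def improvise(seed: List[int], length: int,
              rng: Optional[random.Random] = None) -> List[int]:
    """Extend `seed` by `length` notes using a first-order Markov chain."""
    rng = rng or random.Random()
    transitions = build_transitions(seed)
    melody = list(seed)
    for _ in range(length):
        choices = transitions.get(melody[-1])
        # Repeated successors are listed more than once, so common moves are
        # more likely. At a dead end, jump to any note from the seed.
        melody.append(rng.choice(choices) if choices else rng.choice(seed))
    return melody


seed = [60, 62, 64, 62, 60, 67]  # C D E D C G, entered by a non-musician
print(improvise(seed, 8, random.Random(42)))
```

A first-order chain only remembers the previous note, which is why its output wanders; models that condition on longer context produce phrases with more structure.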

Video

AI systems can also generate interesting video experiences; one example is the Poetic AI exhibition by Ouchhh at the Atelier des Lumières. Of course, some results can be a bit… strange.

We recently hosted a fascinating artist talk by Gary Boodhoo, who uses neural networks and AI to generate amazing interactive artworks...

Generative Design

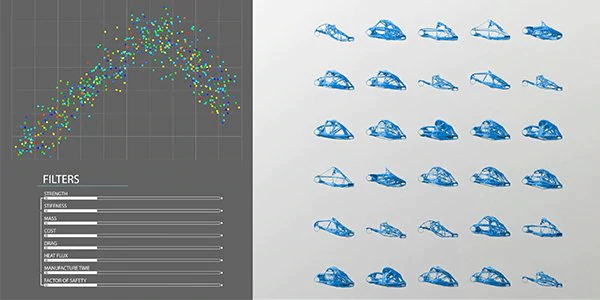

Another fascinating application of AI is what is called “generative design”: given a set of input parameters related to the function of an object, a neural network can generate multiple design options that support that function.
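The basic loop behind generative design is: propose many candidate designs, discard those that fail the functional requirement, and present the best survivors as options. The sketch below shows that loop for a hypothetical rectangular bracket. It swaps in plain random search for a neural network, and it uses the textbook bending formula for a rectangular section (width × thickness³ / 12) as a stand-in for real simulation; the `Design` class, parameter ranges, and stiffness target are all illustrative assumptions.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Design:
    width_mm: float
    thickness_mm: float

    @property
    def material(self) -> float:
        # Cross-sectional area as a proxy for material used.
        return self.width_mm * self.thickness_mm

    @property
    def stiffness(self) -> float:
        # Second moment of area of a rectangle, b * h^3 / 12 (a stand-in
        # for the structural simulation a real tool would run).
        return self.width_mm * self.thickness_mm ** 3 / 12


def generate_designs(min_stiffness: float, n_candidates: int = 1000,
                     n_options: int = 5, seed: int = 0) -> List[Design]:
    """Return the lightest candidates that meet the stiffness requirement."""
    rng = random.Random(seed)
    candidates = [
        Design(rng.uniform(10, 100), rng.uniform(1, 20))
        for _ in range(n_candidates)
    ]
    feasible = [d for d in candidates if d.stiffness >= min_stiffness]
    feasible.sort(key=lambda d: d.material)  # least material first
    return feasible[:n_options]


for option in generate_designs(min_stiffness=5000.0):
    print(f"{option.width_mm:.1f} x {option.thickness_mm:.1f} mm, "
          f"area {option.material:.0f} mm^2")
```

A learned generator mainly replaces the blind random proposals with smarter ones, so far fewer candidates need to be evaluated before good options appear.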

Creative Tools

In addition to producing original output directly, AI tools can support artists in their creative process. We’re already seeing some interesting examples.

Video editing

Adobe’s new Content-Aware Fill for video relies on AI to erase objects from footage and intelligently fill in the cleared area using information from other frames.

Remove.bg is another AI-driven creative tool: it analyzes a picture to identify people and products, then removes the background with no further intervention from the user.

Where to next?

Britelite is unusual among creative technology agencies in having both a top-notch creative team and a rigorous engineering team under one roof, along with extensive experience creating memorable experiences for people. Over the past few years, we’ve developed a great deal of expertise in the user experience, software, and hardware components required to use AI in the experiential space.

Britelite Immersive is a creative technology company that builds experiences for physical, virtual, and online realities.